Mathematical Framework Enhances AI Agent Coordination in Real-World Applications

A new mathematical model optimizes large language models in multi-agent systems by balancing output brevity and contextual relevance.

Researchers have developed a rigorous mathematical framework, dubbed the L Function, to enhance the efficiency and contextual awareness of Large Language Models (LLMs) in Multi-Agent Systems (MAS). This innovation addresses the ad hoc integration of LLMs in dynamic environments like finance, healthcare, and autonomous robotics.

The L Function: A Unified Approach

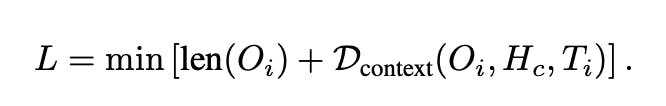

The L Function is defined as:

LaTeX Notation: L = \min \left[\text{len}(O_i) + \mathcal{D}_{\text{context}}(O_i, H_c, T_i)\right]

Key components:

- len(O): Length of the generated output.

- D_context(O, H, T): Contextual deviation, balancing task alignment, historical coherence, and system dynamics.
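
As a rough illustration of the definition above, the minimization can be read as picking, from a set of candidate outputs, the one with the smallest sum of length and contextual deviation. The sketch below is not taken from the linked repository: the function name `l_function`, the callable signature for `D_context`, the `toy_deviation` placeholder, and the choice to measure length in characters are all assumptions made for the example.

```python
from typing import Callable, Sequence, Tuple

# Hypothetical signature for D_context(O_i, H_c, T_i); the article does not specify one.
ContextDeviation = Callable[[str, Sequence[str], str], float]


def l_function(
    candidates: Sequence[str],
    history: Sequence[str],
    task: str,
    d_context: ContextDeviation,
) -> Tuple[str, float]:
    """Return the candidate minimizing len(O_i) + D_context(O_i, H_c, T_i) and its score."""
    if not candidates:
        raise ValueError("need at least one candidate output")
    # Assumption: len() counts characters; a token count could be used instead.
    scored = [(len(o) + d_context(o, history, task), o) for o in candidates]
    best_score, best_output = min(scored, key=lambda pair: pair[0])
    return best_output, best_score


def toy_deviation(output: str, history: Sequence[str], task: str) -> float:
    # Placeholder only: a flat penalty when the task keyword is missing from the output.
    return 0.0 if task in output else 100.0


candidates = ["ok", "stop the vehicle now", "the vehicle should stop"]
best, score = l_function(candidates, history=[], task="stop", d_context=toy_deviation)
print(best, score)
```

With this placeholder deviation, the shortest candidate ("ok") loses because it ignores the task, and the shorter of the two on-task candidates wins, so the script prints `stop the vehicle now 20.0`.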

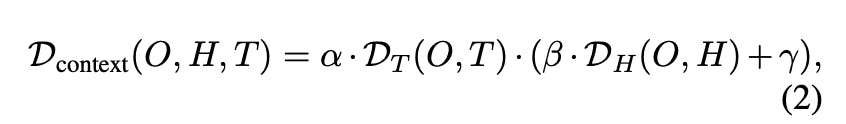

Contextual Deviation Breakdown

LaTeX Notation: \mathcal{D}_{\text{context}}(O, H, T) = \alpha \cdot \mathcal{D}_{T}(O, T) \cdot (\beta \cdot \mathcal{D}_{H}(O, H) + \gamma)

- D_T(O, T): Task-specific deviation.

- D_H(O, H): Historical deviation, measured using cosine similarity for its semantic interpretability and computational efficiency.

- α, β, γ: Adjustable parameters for weighting task importance, historical coherence, and robustness.
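
The breakdown above can be sketched in a few lines of Python. This is a minimal example under stated assumptions, not the authors' implementation: the article says historical deviation is measured with cosine similarity but does not say how similarity becomes a deviation, so `1 - cosine similarity` is assumed here; the embedding vectors, function names, and default parameter values are likewise hypothetical.

```python
import math
from typing import Sequence


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def historical_deviation(output_vec: Sequence[float], history_vec: Sequence[float]) -> float:
    # Assumption: D_H = 1 - cosine similarity, so identical directions give 0.
    return 1.0 - cosine_similarity(output_vec, history_vec)


def context_deviation(
    d_task: float,
    d_hist: float,
    alpha: float = 1.0,
    beta: float = 1.0,
    gamma: float = 0.5,
) -> float:
    """D_context = alpha * D_T * (beta * D_H + gamma), as in the formula above."""
    return alpha * d_task * (beta * d_hist + gamma)


# Hypothetical embeddings: one output aligned with history, one orthogonal to it.
aligned = historical_deviation([1.0, 0.0], [1.0, 0.0])
orthogonal = historical_deviation([1.0, 0.0], [0.0, 1.0])
print(context_deviation(d_task=2.0, d_hist=aligned))
print(context_deviation(d_task=2.0, d_hist=orthogonal))
```

Because the two deviations are multiplied, γ keeps the task term alive when the output matches history exactly: with D_H = 0 the result is α · γ · D_T rather than zero.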

Practical Applications

- Autonomous Systems: Prioritizes critical tasks like obstacle avoidance in self-driving fleets.

- Healthcare Decision Support: Ensures historical patient data is weighed appropriately in emergency triage systems.

- Customer Support Automation: Dynamically adjusts response verbosity based on ticket priority.

Experimental Validation

- Task-Specific Deviation: Tasks with optimal response lengths yielded minimal L values.

- Historical Context Deviation: Larger context windows increased deviation, highlighting the risk of semantic noise.

- Dynamic λ Scaling: Favored high-priority tasks effectively under low-resource conditions.

GitHub Repository: https://github.com/worktif/llm_framework?utm_source=agenthunter.io&utm_medium=news&utm_campaign=newsletter

About the Author

Dr. Lisa Kim

AI Ethics Researcher

Leading expert in AI ethics and responsible AI development with 13 years of research experience. Former member of Microsoft AI Ethics Committee, now provides consulting for multiple international AI governance organizations. Regularly contributes AI ethics articles to top-tier journals like Nature and Science.